Health warning: this is quite a long posting and describes ideas for work that has not yet been undertaken.

My previous post gave a broad introduction to what we are doing and why. This one gives a brief introduction to text mining techniques and then explores how they might be applied in a search for possible weak signals. The requirements, in outline, are that the technique(s) to be adopted should:

- consume diverse sources to compensate for the surveillance filter;

- strip out the established trends, memes etc;

- not embed a-priori mental models;

- discriminate relevance (reduce “noise”).

Furthermore, the best techniques will also satisfy aims such as:

- replicability; they should be easily executed a number of times for different recorded source collections and longitudinally in time.

- adoptability; it should be possible for digitally literate people to take up and potentially modify the techniques with only a modest investment in time to understand topic mining techniques.

- intelligibility; what the various computer algorithms do should be communicable in plain language to an educated person.

- parsimony; the simplest approach that yields results (Occam’s Razor) is preferred.

- flexibility; tuning, tweaking etc and an exploratory approach should be possible.

- amenable to automation; once a workable “machine” has been developed we would like to be able to treat it as a black box, either with simple inputs and outputs or one that can be executed at preset time intervals.

The approach taken will be less elaborate than the software built by Klerx (see “An Intelligent Screening Agent to Scan the Internet for Weak Signals of Emerging Policy Issues (ISA)”) but his paper has influenced my thinking.

Finally: this is not text mining research and the findings of importance are about technology enhanced learning.

Data Mining Strategies

Text mining is not a new field and is one which has rather fuzzy boundaries and various definitions. As far back as 1999, Hearst considered various definitions (“Untangling Text Data Mining”) in what was then a substantially less mature field. As the web exploded, more applications and more implied definitions have become apparent. I will not attempt to create a new definition nor to adopt someone else’s, although Hearst’s conception of “a process of exploratory data analysis that leads to the discovery of heretofore unknown information, or to answers to questions for which the answer is not currently known” nicely captures the spirit.

The methods of data mining, of which text mining is a part, can be crudely split into two:

- methods based on an initial model and the deduction of the rules or parameters that fit the data to the model. The same strategy is used to fit experimental data to a mathematical form in least squares fitting, for example to an assumed linear relationship.

- methods that do not start with an explicit model but use a more inductive approach.

Bearing in mind our requirement to avoid a priori mental models, an inductive approach is clearly most appropriate. Furthermore, to adopt a model-based approach to textual analysis is a challenging task involving formal grammars, ontologies, etc and would fail the test of parsimony until other approaches have been exhausted. Finally: even given a model-based approach to text analysis it is not clear that models of the space in which innovation in technology enhanced learning occurs are tractable; education and social change are deeply ambiguous, fuzzy and relevant theories are many, unvalidated and often contested. The terms “deductive” and “inductive” should not be taken too rigidly, however, and I am aware that they may be applied to different parts of the methods described below.

Inductive approaches, sometimes termed “machine learning”, are extensively used in data mining and may again be subjected to a binary division into:

- “supervised” methods, which make use of some a priori knowledge, and

- “unsupervised” methods, which just look for patterns.

Supervision should be understood rather generally; the typical realisation of supervision is the use of a training set of data that has previously been classified, related to known outcomes or rated in some way. The machine learning then involves induction of the latent rules by which new data can be classified, outcomes predicted etc. This can be achieved using a range of techniques and algorithms, such as artificial neural networks. A supervised learning approach to detecting insurance fraud might start with data on previous detected cases to learn the common features. A text mining example is the classification of the subject of a document given a training set of documents with known subjects.

Unsupervised methods can take a range of forms and the creation of new ones is a continuing research topic. Common methods use one of several definitions of a measure of similarity to identify clusters. Design choices, such as whether the algorithm divides a set in successive binary splits or aggregates items into overlapping or non-overlapping clusters, will tend to give slightly different results.

From this synopsis of inductive approaches it seems like we do not have an immediately useful strategy to hand. By definition, we do not have a training set for weak signals (although it could be argued that there are latent indicators of a weak signal and that we would gain some insight by looking at the history of changes that were once weak signals). The standard methods are oriented towards finding patterns, regularities, similarities, making predictions given previous patterns, which are not weak signals by definition.

For discovery of possible weak signals, it appears that we need to look from the opposite direction: to find the regularities so that they can be filtered out. Another way of expressing this is to say that it is outliers that will sometimes contain the information we most value, which is not usually the case in statistics. A concise description of outliers from Hawkins is as applicable to our weak signals work as it is to general statistics: “an observation that deviates so much from other observations as to arouse suspicion that it was generated by a different mechanism” (D.M. Hawkins, Identification of Outliers, ISBN 041221900X). On this basis, a case for excluding outliers from a statistical treatment may often be built, whereas for us an outlier is a pointer to a subject for further exploration, dissemination or hypothesis.

Actually, it is likely to be more subtle than simply filtering out regularities and to require some cunning in the design of our mining process. I will describe some ideas later. Some of these describe a process that will filter out the largest regularities while retaining just-detectable ones; maybe a larger dataset than a human can reasonably absorb will show these up. Others will look at differences in the regularities between different domains. Following from this, it is not the case that finding regularities is bad, rather that it may be necessary to stray a little from normal practice, although borrowing as much as possible. “Two Non-elementary Techniques”, below, briefly outlines two relevant approaches to finding structure.

Ensembles

It should be quite clear that any kind of search for potential weak signals is beset by indeterminacy inherent in the socio-technical world in which they arise. I contend that any approach to finding such signals is also easily challenged over reliability and validity. Referring back to “Weak Signals and Text Mining I”, the TELMap human sources (Delphi based) and recorded sources (text mining based) will do the best they can but neither will be able to mount a robust defence of any potential weak signal except in retrospect. This is why we say “potential” and emphasise that discourse over such signals is essential.

One way of mitigating this problem is to take inspiration from the use of “ensembles” in modeling and prediction in complex systems such as the weather. The idea is quite simple; use a range of different models or assumptions and either take a weighted average or look for commonality. The assumption is that wayward behaviour arising from approximations and assumptions, which are practically necessary, can be caught.

A slightly different perspective on dealing with indeterminacy is expressed by Petri Tapio in “Disaggregative policy Delphi: Using cluster analysis as a tool for systematic scenario formation” (Technological Forecasting & Social Change 70 (2002) 83 – 101):

“Henrion et al. go as far as suggesting to run the analysis with all available clustering methods and pick up the one that makes sense. From the standard Popperian deductionist point of view adopted in statistical science, this would be circular reasoning. But from the more Newtonian inductionist point of view adopted in many technical and qualitatively oriented social sciences, experimenting [with] different methods would also seem as a relevant strategy, because of the Dewian ‘learning by doing’ background philosophy.”

The combination of these two related ideas will be adopted:

- Bearing in mind the risk of re-introduction of the “mentality filter” (see part I), various methods and information sources will be experimented with to look for what “makes sense”. In an ideal scenario, several people with different backgrounds would address the same corpus to compensate for the mentality filter of each.

- Cross-checking between the possible weak signals identified in the human and recorded sources approaches and between text mining results (even those that don’t “make sense” by themselves) will be undertaken to look for more defensible signals by analogy with ensemble methods.
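
The “look for commonality” half of the ensemble idea can be sketched in a few lines. This is only an illustration in Python (not the tooling discussed later in this post); the function name `commonality`, the `min_votes` threshold and the candidate labels are all hypothetical, and deciding what counts as “the same” candidate across methods is the hard part that the sketch assumes away.

```python
from collections import Counter

def commonality(candidate_sets, min_votes=2):
    """Return candidates proposed by at least `min_votes` of the methods.

    candidate_sets: one collection of candidate labels per method or source.
    """
    votes = Counter()
    for candidates in candidate_sets:
        votes.update(set(candidates))  # each method gets one vote per candidate
    return sorted(c for c, v in votes.items() if v >= min_votes)

# Hypothetical output of three methods (e.g. Delphi plus two mining runs).
runs = [{"topic_a", "topic_b"}, {"topic_b"}, {"topic_b", "topic_a", "topic_c"}]
print(commonality(runs))
```

Candidates that fall below the threshold are not discarded as wrong; they simply have less support and would need more discussion before being defended.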

Having a human in the process – seeing what makes sense – should help to spot juxtaposition of concepts, dissonance with context, etc., as well as seeing when the method just seems to be working. It will also help to eliminate false positives, e.g. an apparently new topic might actually be a duplicated spelling mistake.

A Short Introduction to Elementary Text Mining Techniques

The starting point, whatever specific approach is adopted, will always be to process some text, which will be referred to as a “document” whether or not this term would be used colloquially, to generate some statistics upon which computation can occur. These statistics might be quite abstract measures used to assess similarity or they might be more intelligible. On the whole, I prefer the latter since the whole point of the work is to find meaning in dis-similarity. The mining process will consider a large number, where “large” may start in the hundreds, of documents in a collection from a particular source. The term “corpus” will be used for collections like this.

I will be drawing from the standard toolkit of text processing to get from text to statistics, comprising the separate operations described below. These are “elementary” in the sense that they don’t immediately lead us to useful information. They are operations suited to a “bag of words” treatment, which seems quite crude but is common practice; it has been shown to be good enough for many applications, it is computationally tractable with quite large corpora and it lends itself to relatively intelligible results. In “bag of words”, the word order is almost totally neglected and there is no concept of the meaning of a sentence. The meaning of a document becomes something that is approached statistically rather than through the analysis of the linguistic structure of sentences. Bag-of-words is just fine for our situation as we don’t actually want the computer to “understand” anything and we do want to apply statistical measures over moderate-sized corpora.

“Stop Word” Removal

Some of the words used in a document indicate little or nothing of what the document is about in a bag-of-words treatment, although they may be highly significant in a sentence. “No” is a good example of a word with different significance at sentence and bag-of-words levels. It is easy to call other examples to mind: or, and, them, of, in… In the interest of processing efficiency and the removal of meaningless and distracting indicators of similarity/difference, stop words should be removed at an early stage rather than trying to filter out what they cause later in the process. Differences in the occurrence of stop words can be considered to be 100% “noise” but they are easily-filtered out at an early stage. Standard stop-word lists exist for most languages and are often built into software for text mining, indexing, etc. It is possible that common noise-words will be discovered while looking for possible weak signals but these can be added to the stop-list.
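
As a minimal sketch of stop-word removal, in Python and using a deliberately tiny, made-up stop list (a real study would start from a standard list for the language), the operation amounts to a filter over tokens. The names `STOP_WORDS`, `remove_stop_words` and `extra_noise_words` are my own for illustration; the last one shows how noise words discovered later could be added to the stop list.

```python
# Illustrative stop list only; standard lists are far longer.
STOP_WORDS = {"a", "an", "and", "in", "of", "or", "the", "them", "to"}

def remove_stop_words(tokens, stop_words=STOP_WORDS, extra_noise_words=()):
    """Drop stop words (case-insensitively), plus any noise words found later."""
    blocked = {w.lower() for w in stop_words} | {w.lower() for w in extra_noise_words}
    return [t for t in tokens if t.lower() not in blocked]

tokens = ["the", "design", "of", "learning", "and", "assessment"]
print(remove_stop_words(tokens))
print(remove_stop_words(tokens, extra_noise_words=["assessment"]))
```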

Tokenisation

Tokenisation involves taking a stream of characters – letters, punctuation, numbers – and breaking it up into discrete items. These items are often what we would identify as words but they could be sentences, fixed length chunks or some other definable unit. Sometimes so-called “n-grams” are created in tokenisation. I will generally use single word tokens but some studies may include bi-grams or tri-grams. For example, all of the following might appear as items in a bag-of-words using bi-gram tokenisation: “learning”, “design”, “learning design”.
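
The bi-gram example can be reproduced with a short sketch. It is written in Python and assumes a very simple rule for what a word is (runs of letters, digits and apostrophes, lower-cased); real tokenisers make more careful choices, and the function names are mine.

```python
import re

def tokenise(text):
    """Split text into lower-case word tokens (letters, digits, apostrophes)."""
    return re.findall(r"[a-z0-9']+", text.lower())

def ngrams(tokens, n):
    """Return the n-grams of a token list as space-joined strings."""
    return [" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def bag_items(text, max_n=2):
    """All items for a bag of words: single words, then bi-grams, and so on."""
    tokens = tokenise(text)
    items = []
    for n in range(1, max_n + 1):
        items.extend(ngrams(tokens, n))
    return items

print(bag_items("Learning design"))  # ['learning', 'design', 'learning design']
```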

Part-of-Speech Tagging and Filtering

For the analysis of meaning in a sentence, the tagging of the part of speech (POS) of each word is clearly important. For the bag-of-words text mining it will not be so. I expect to use POS tagging in only a few applications (see below). When used, it will probably be accompanied by a filtering operation to limit the study to nouns or various forms of verb (VB* in the Penn Treebank POS tags scheme) in the interest of relevance discrimination.
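
Assuming that a tagger has already produced (word, tag) pairs using Penn Treebank tags, the filtering step is a one-liner. The sketch below is in Python; the tagging itself is out of scope here, and the sample tags were written by hand for illustration.

```python
def filter_by_pos(tagged_tokens, prefixes=("NN", "VB")):
    """Keep words whose Penn Treebank tag starts with one of the prefixes.

    "NN" covers NN, NNS, NNP, NNPS; "VB" covers VB, VBD, VBG, VBN, VBP, VBZ.
    """
    return [word for word, tag in tagged_tokens if tag.startswith(prefixes)]

# Hand-tagged example; a real pipeline would obtain tags from a POS tagger.
tagged = [("students", "NNS"), ("quickly", "RB"), ("learn", "VBP"),
          ("the", "DT"), ("design", "NN")]
print(filter_by_pos(tagged))                   # ['students', 'learn', 'design']
print(filter_by_pos(tagged, prefixes=("NN",)))  # ['students', 'design']
```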

Stemming

Many words change according to the part of speech and have related forms which effectively carry similar meaning in a bag of words. For example: learns, learner, learning, learn. This variation will generally equate to “noise”, at best a minor distraction and at worst something that hides a potential weak signal by dissipating a detectable signal concept into lexically-distinct terms. In general it is statistically desirable to reduce the dimensionality of variables, especially if they are not independent, and stemming does this since each word/term occurrence is a variable.

The standard approach is “stemming”, which reduces related words to what is generally the starting part of all of the related words (e.g. “learn”), although it often leads to a stem that is not actually a word. There are a variety of ways this can be done, even within a single language. The Porter stemmer is widely used for English.
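
To make the idea concrete, here is a toy suffix-stripper in Python. It is emphatically not the Porter stemmer, which applies a much richer set of rules in several passes; the suffix list and the `min_stem` guard are assumptions chosen only so that the “learn” family collapses to one term.

```python
# Toy rule set, checked in order; NOT the Porter algorithm.
SUFFIXES = ("ing", "ers", "er", "s")

def crude_stem(word, min_stem=3):
    """Strip the first matching suffix, provided enough of the word remains."""
    word = word.lower()
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= min_stem:
            return word[: -len(suffix)]
    return word

print([crude_stem(w) for w in ["learns", "learner", "learning", "learn"]])
# ['learn', 'learn', 'learn', 'learn']
```

Even this toy shows why a standard, documented algorithm is preferable: crude rules conflate or mangle words in ways that are hard to anticipate.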

Document Term Statistics

A simple but practically useful way to generate some statistics is to count the occurrence of terms (words or n-grams, having been filtered for stop-words and stemmed). A better measure, which compensates for differences in document length, is the term frequency rather than the count; term frequency may be expressed as the percentage of the terms occurring in the document that are of a given type. Sometimes a simple yes/no indicator of occurrence may be useful.
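
The three statistics just mentioned (count, term frequency and yes/no occurrence) can be sketched as follows. This is illustrative Python with function names of my own; term frequency is returned as a fraction rather than a percentage.

```python
from collections import Counter

def term_counts(terms):
    """Raw count of each term in one document."""
    return Counter(terms)

def term_frequencies(terms):
    """Fraction of the document's terms that are of each type."""
    counts = Counter(terms)
    total = sum(counts.values())
    return {t: c / total for t, c in counts.items()} if total else {}

def term_presence(terms):
    """Simple yes/no (1 if present) indicator for each term."""
    return {t: 1 for t in set(terms)}

doc = ["learn", "design", "learn", "learn"]
print(term_counts(doc)["learn"])       # 3
print(term_frequencies(doc)["learn"])  # 0.75
```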

A potentially more interesting statistic for a search for possible weak signals is the “term frequency-inverse document frequency” (tf-idf). This opaquely-named measure is obtained by multiplying the term frequency by the logarithm of the inverse of the fraction of documents that contain the term. This elevates the measure if the term is sparsely distributed among documents, which is exactly what is needed to emphasise outliers.
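
A minimal sketch, taking the most common textbook form of the measure, tf × log(N / df), where N is the number of documents and df is the number of documents containing the term. The use of the natural logarithm and the absence of any smoothing are assumptions; software packages differ on both points. The code is Python and the sample terms are invented.

```python
import math
from collections import Counter

def tf_idf(corpus):
    """corpus: list of documents, each a list of terms.

    Returns one {term: weight} dict per document, weight = tf * ln(N / df).
    """
    n_docs = len(corpus)
    doc_freq = Counter()
    for doc in corpus:
        doc_freq.update(set(doc))  # count each term once per document
    weights = []
    for doc in corpus:
        counts = Counter(doc)
        total = sum(counts.values())
        weights.append({t: (c / total) * math.log(n_docs / doc_freq[t])
                        for t, c in counts.items()})
    return weights

corpus = [["learn", "design"], ["learn", "badge"], ["learn", "design"]]
w = tf_idf(corpus)
print(w[0]["learn"])                    # 0.0: the term occurs in every document
print(w[1]["badge"] > w[0]["design"])   # True: the rarer term scores higher
```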

Given one of these kinds of document item statistic it is possible to hunt for some interesting information. This might involve sorting and visually reviewing a table of data, resorting to a graphical presentation, some ad-hoc recipe, use of a structure-discovery algorithm (e.g. clustering) that computes a “distance measure” between documents from the item statistics, or a combination of these.
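
One possible “distance measure” is cosine distance between the term-weight vectors of two documents; it is a common choice but only one of several, and picking it here is my assumption. The Python sketch below also includes a simple ad-hoc recipe, the mean distance of each document to all the others, as a crude way to rank documents by how isolated they are.

```python
import math

def cosine_distance(a, b):
    """1 - cosine similarity between two sparse {term: weight} vectors."""
    dot = sum(w * b.get(t, 0.0) for t, w in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 1.0  # convention: an empty document is maximally distant
    return 1.0 - dot / (norm_a * norm_b)

def mean_distance_to_others(docs):
    """Mean cosine distance from each document to every other document."""
    if len(docs) < 2:
        raise ValueError("need at least two documents")
    return [sum(cosine_distance(d, o) for j, o in enumerate(docs) if j != i)
            / (len(docs) - 1)
            for i, d in enumerate(docs)]

docs = [{"learn": 1.0}, {"learn": 1.0}, {"badge": 1.0}]
print(mean_distance_to_others(docs))  # [0.5, 0.5, 1.0]
```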

Synonyms, Hyponyms, …

For the reasons outlined in the section on stemming, it can be helpful to reduce the number of terms by mapping several synonyms onto one term or hyponyms onto their hypernym (e.g. scarlet, vermilion, carmine, and crimson are all hyponyms of red). The Wordnet lexical database contains an enormous number of word relationships, not just synonyms and hypo/hyper-nyms. I do not intend to adopt this approach, at least in the first round of work, as I fear that it will be hard to be a master of the method rather than the reverse. For example, Wordnet makes imprinting a hyponym of learning – “imprinting — (a learning process in early life whereby species specific patterns of behavior are established)” – which I can see as a linguistically sensible relationship but one with the unintended consequence of suppressing potential weak signals.

An alternative use of Wordnet (or similar database) would be to expand one or more words into a larger set. This might be easier to control and would quickly generate what could be used as a set of query terms, for example to search for further evidence once a possible weak signal shortlist has been created. One of my “Application Scenarios”, below, proposes the use of expansion.

Two Non-elementary Techniques

The section “Data Mining Strategies” outlined some of the strengths and weaknesses of common approaches in relation to our quest for possible weak signals. It stressed the need to work with methods that focus on finding structure and regularities alongside a search for outliers. Two relevant techniques that go beyond the relative simplicity of the elementary text mining techniques outlined above are clustering and topic modeling. “Topic modeling” is a relatively specific term whereas “clustering” covers more diversity.

Clustering methods – many being well-established – may be split into three categories: partitioning methods, hierarchical methods and mapping methods. These usually work by computing a similarity (or distance) between the items being clustered. In our case it is the document term statistics that will give a location for each document.

The hierarchical approach is expected to make visible the most significant structure viewed from the top down (although the algorithms work from the bottom up in “agglomerative” hierarchical clustering) and so not to lend itself to a search for possible weak signals, although it is appropriate for scientific studies and for document subject classification, where we naturally use hierarchical taxonomies.

Partitioning does not coerce the structure into a hierarchy and so may be expected to leave more of the detail in place. There are a number of different objectives that may be chosen for partitioning and potentially several algorithms for each. The “learning by doing” philosophy noted in the section “Ensembles” will be adopted. An old but useful description of the mathematics of some established clustering algorithms has been provided by Anja Struyf, Mia Hubert, Peter Rousseeuw in the Journal of Statistical Software Vol 1 Issue 4.
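
To give a feel for what a partitioning method does, here is a small Python sketch of one simple variant: an alternating k-medoids iteration over a precomputed distance matrix. It is not taken from the paper cited above and is simpler than the established algorithms described there; it assumes that distinct items have positive distances and that the caller supplies the initial medoids (both assumptions of mine).

```python
def k_medoids(dist, initial_medoids, max_iter=100):
    """Partition items 0..n-1 given a symmetric distance matrix `dist`.

    Returns {medoid_index: [member indices]}.
    """
    medoids = list(initial_medoids)
    clusters = {}
    for _ in range(max_iter):
        # Assignment step: each item joins its nearest medoid.
        clusters = {m: [] for m in medoids}
        for i in range(len(dist)):
            nearest = min(medoids, key=lambda m: dist[i][m])
            clusters[nearest].append(i)
        # Update step: the new medoid minimises total distance within its cluster.
        new_medoids = []
        for m, members in clusters.items():
            if members:
                new_medoids.append(
                    min(members, key=lambda c: sum(dist[c][j] for j in members)))
            else:
                new_medoids.append(m)  # keep a medoid that attracted no items
        if new_medoids == medoids:
            break  # converged: the medoids did not change
        medoids = new_medoids
    return clusters

# Four "documents" placed on a line at 0, 1, 10 and 11 for illustration.
points = [0, 1, 10, 11]
dist = [[abs(p - q) for q in points] for p in points]
print(sorted(k_medoids(dist, initial_medoids=[0, 1]).values()))  # [[0, 1], [2, 3]]
```

Different starting medoids, a different number of clusters or a different distance measure can all change the partition, which is one reason for the exploratory stance taken here.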

Hierarchical and partitioning approaches have been popular for many years whereas mapping approaches are a more recent innovation, probably due to greater computing power requirements. Self Organising Maps and Multi-dimensional scaling are two common “mapping approaches”. They are probably best understood by thinking of the problem of representing the document term statistics (a table with many columns, each representing the occurrence of terms, and many rows, one for each document) in two dimensions. This is clustering in the sense that aspects of sameness among the ‘n’ dimensions of the columns are aggregated. Although this process of dimension-reduction has the desirable property of making the data easier to visualise, it may be unsuitable for the discovery of possible weak signals.

Clustering is a stereotypical unsupervised learning approach; there is no embedded model of the data. All that is needed is a means to compute similarity, hence the same methods can be applied to experimental data, text etc. Topic Modeling, however, introduces a theory of how words, topics and documents are related. This has two important consequences: the results of the algorithm(s) may be more intelligible; the model constrains the range of possible results. The latter may be either desirable or undesirable depending on the validity of the model. Intelligibility is improved because we are able to better relate to the concept of a topic than we are to some abstract statement of statistical similarity.

Probabilistic Topic Models (see Steyvers and Griffiths) assume that a bag of words, each word having a frequency value, can be used to capture the essence of a topic. A given document may cover several topics to differing degrees, leading to a document term frequency in the obvious way. The statistical inference required to work backwards from a set of documents to a plausible set of topics, together with the word frequency weightings for each topic and the topic percentages in each document, requires some mathematical cunning but has been programmed for R (see Grün and Hornik topicmodels package) as well as MATLAB (see Mark Steyvers’ toolbox).
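
The “obvious way” in the forward direction is a weighted mixture: the expected frequency of a word in a document is the sum, over topics, of the document's share of that topic multiplied by the word's frequency within the topic. The Python sketch below shows only this forward calculation, with invented topics and numbers; the hard part, inferring topics from documents, is what the packages mentioned above do and is not attempted here.

```python
def expected_term_frequencies(topic_word, doc_topic):
    """Mix per-topic word frequencies according to a document's topic shares.

    topic_word: {topic: {word: frequency within topic}}, each summing to 1.
    doc_topic:  {topic: share of the document}, summing to 1.
    """
    freqs = {}
    for topic, share in doc_topic.items():
        for word, p in topic_word[topic].items():
            freqs[word] = freqs.get(word, 0.0) + share * p
    return freqs

# Two made-up topics and a document split evenly between them.
topics = {"A": {"learn": 0.5, "design": 0.5}, "B": {"game": 1.0}}
document = {"A": 0.5, "B": 0.5}
print(expected_term_frequencies(topics, document))
# {'learn': 0.25, 'design': 0.25, 'game': 0.5}
```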

Probabilistic Topic Models could be useful to attenuate some of the noisiness expected with approaches working purely at the document-term level. This might make identification of possible weak signals easier; “discriminate relevance” was how it was phrased in the statement of requirements above. It is expected that some tuning of parameters, especially the number of topics, will be required. There is also a random element in the algorithms employed, which means that results may differ between runs. The margin of the stable part of the results may well contain pointers to possible weak signals. As for clustering, a “learning by doing” approach will be used, taking care not to introduce a mentality filter.

Sources of Information for Mining

One of the premises of text mining is access to relatively large amounts of information and the explosion of text on the web is clearly a factor in an increasing interest in text mining over the last decade and a half. There are both obvious and non-obvious reasons why an unqualified “look on the web” is not an answer to the question “where should I look for possible weak signals”. Firstly, the web is simply too mindbogglingly big. More subtly, it is to be expected that differences in style and purpose of different pieces of published text would hide possible weak signals; some profiling will be required to create corpora that contain comparable documents within each. Finally, crawling web pages and scraping out the kernel of information is a laborious and unreliable operation when you consider the range of menus, boilerplate, advertisements, etc that typically abound.

Three kinds of source get around some of these issues:

- blogs occur with a reduced range of style and provide access to RSS feeds that serve-up the kernel of information as well as publication date;

- journal abstracts generally have quite a constrained range of style, are keyword-rich and can be obtained using RSS or OAI-PMH to avoid the need for scraping;

- email list archives are not so widely available as RSS (but this is sometimes available) and there is often stylistic consistency, although quoted sections, email “signatures” and anti-spam scan messages may present material in need of removal.
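
As an indication of how little scraping a feed requires, the following Python sketch pulls the title, text and publication date out of an RSS 2.0 document using only the standard library. The sample feed is invented, fetching over the network is left out, and real feeds vary (for example, full text is often carried in extension elements rather than in `description`), so treat this as a starting point only.

```python
import xml.etree.ElementTree as ET
from email.utils import parsedate_to_datetime

def parse_rss(xml_text):
    """Return one dict per <item> with title, text and publication date."""
    root = ET.fromstring(xml_text)
    docs = []
    for item in root.iter("item"):
        pub = item.findtext("pubDate")
        docs.append({
            "title": item.findtext("title", default=""),
            "text": item.findtext("description", default=""),
            # RSS 2.0 dates use the RFC 822 style, e.g. "Mon, 06 Jun 2011 ..."
            "published": parsedate_to_datetime(pub) if pub else None,
        })
    return docs

# Invented feed for illustration.
SAMPLE_FEED = """<rss version="2.0"><channel><title>Example blog</title>
<item><title>First post</title><description>Some text.</description>
<pubDate>Mon, 06 Jun 2011 10:00:00 GMT</pubDate></item>
</channel></rss>"""

for doc in parse_rss(SAMPLE_FEED):
    print(doc["published"].date(), doc["title"])  # 2011-06-06 First post
```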

My focus will be on blogs and journal abstracts, which are expected to generally contain different information. RSS and OAI-PMH are important methods for getting the data with a minimum of fuss but are not the panacea for all text acquisition woes. RSS came out of news syndication and to this day RSS feeds serve up only the most recent entries. Any attempt to undertake a study that looks at change over time using RSS to acquire the information will generally have to be conducted over a period of time. Atom, a later and similar feed grammar, is sometimes available, but the paging and archival features imagined in RFC 5005 are generally not. Even RSS feeds provided by journal publishers are limited to the latest issue and there is usually no obvious means to hack a URL to recover abstracts from older issues. OAI-PMH provides a means to harvest abstract (etc) information over a date range and there is even an R package that implements OAI-PMH, but many publishers do not offer OAI-PMH access.
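
Harvesting over a date range with OAI-PMH comes down to a `ListRecords` request with `from` and `until` arguments. The Python sketch below only builds the request URL; the base URL is a placeholder, `oai_dc` (simple Dublin Core) is assumed as the metadata format, and a real harvester would also need to fetch the response and follow any `resumptionToken` for long result lists.

```python
from urllib.parse import urlencode

def list_records_url(base_url, from_date, until_date, metadata_prefix="oai_dc"):
    """Build an OAI-PMH ListRecords request for records in a date range."""
    query = urlencode({
        "verb": "ListRecords",
        "metadataPrefix": metadata_prefix,
        "from": from_date,    # dates as YYYY-MM-DD
        "until": until_date,
    })
    return f"{base_url}?{query}"

# https://example.org/oai is a placeholder, not a real repository.
print(list_records_url("https://example.org/oai", "2010-01-01", "2010-12-31"))
```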

A final problem which is specific to blogs is how to garner the URLs for the blogs to be analysed. It seems that all methods by which a list could be compiled are flawed; the surveillance and mentality filters seem unavoidable.

The bottom line is: there will be some work to do before text mining can begin.

On Tools, Process and Design…

The actual execution of the simple text mining approaches outlined in the “short introduction” is relatively straightforward. There are several pieces of software, both code libraries/modules and desktop applications, that can be used after a little study of basic text mining techniques. I plan on using R with the “tm” package (Feinerer et al) for the “elementary” operations, and RapidMiner. The former requires programming experience whereas the latter offers a graphical approach similar to Yahoo Pipes. In principle, the software used should be independent of the text mining process it implements, which can be thought of in flow-chart terms, so long as standard algorithms (e.g. for stemming) are used. In practice, of course, there will be some compromises. The essence of this is that the translation from a conceptual to an executable mining process is an engineering one.

The critical, less-well-defined and more challenging task is to decide how the toolkit of text-mining techniques will be applied. This starts with considering what text sources will be used, moves through how to “clean” text and then to how tokenisation, term frequency weights etc will be used and concludes with how clustering (etc) and visualisation should be deployed. In a sense, this is “designing the experiment” – but I use the term “application scenario” – and it will determine the meaningfulness of the results.

Some Application Scenarios

This section speculates on a number of tactics that might yield possible weak signals. The title of application scenario will be used as its name. Future postings will develop and apply one or more of these application scenarios.

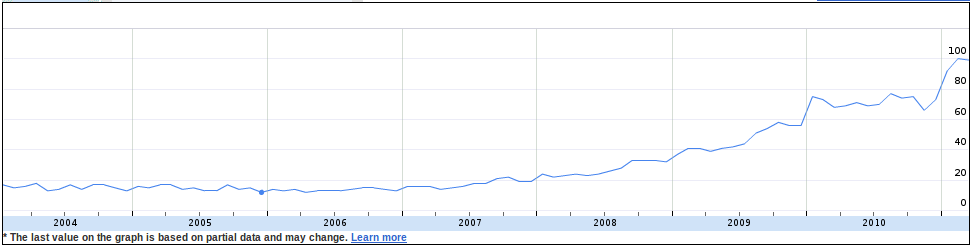

Out-Boundary Crossing

Idea: Strong signals in other domains are spotted by a minority in the TEL domain who see some relevance of them. Discover these.

Notes: The signal should be strong in the other domain so that there is confidence that it is in some sense “real”.

Operationalisation: Extract low-occurrence nouns from a corpus of TEL domain blog posts and cross-match to search term occurrence in Google Trends.

Challenges: Google Trends does not provide an API for data access.

In-Boundary Crossing

Idea: Some people in another domain (e.g. built environment, architectural design) appear to be talking about teaching and learning. Discover what aspect of their domain they are relating to ours.

Notes: This approach clearly cannot work with text from the education domain.

Operationalisation: Use Wordnet to generate one or more sets of keywords relevant to the practice of education and training. Use a corpus of journal abstracts (separate corpora for different domains) and identify documents with high-frequency values for the keyword set(s).

Challenges: It may be difficult to eliminate papers about education in the subject area (e.g. about teaching built environment) other than by manual filtering.

Novel Topics

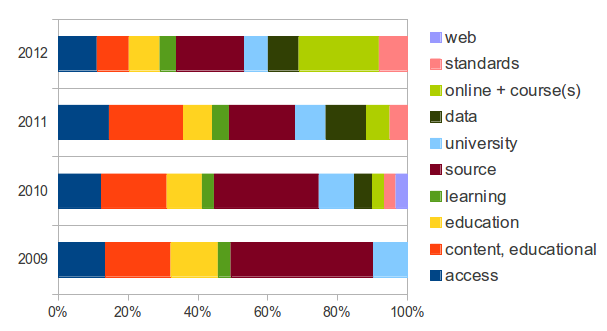

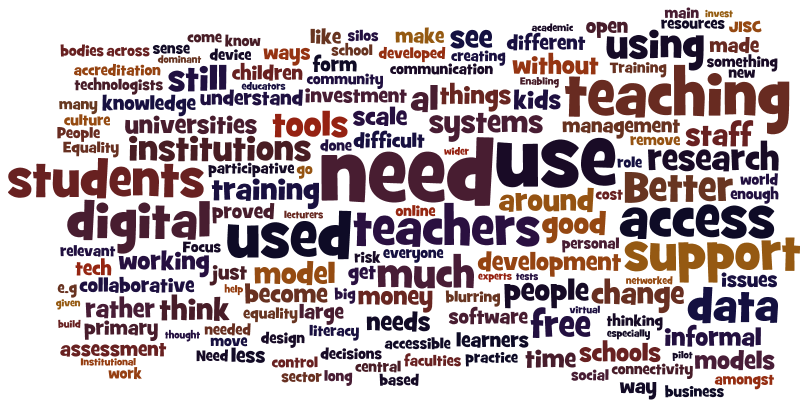

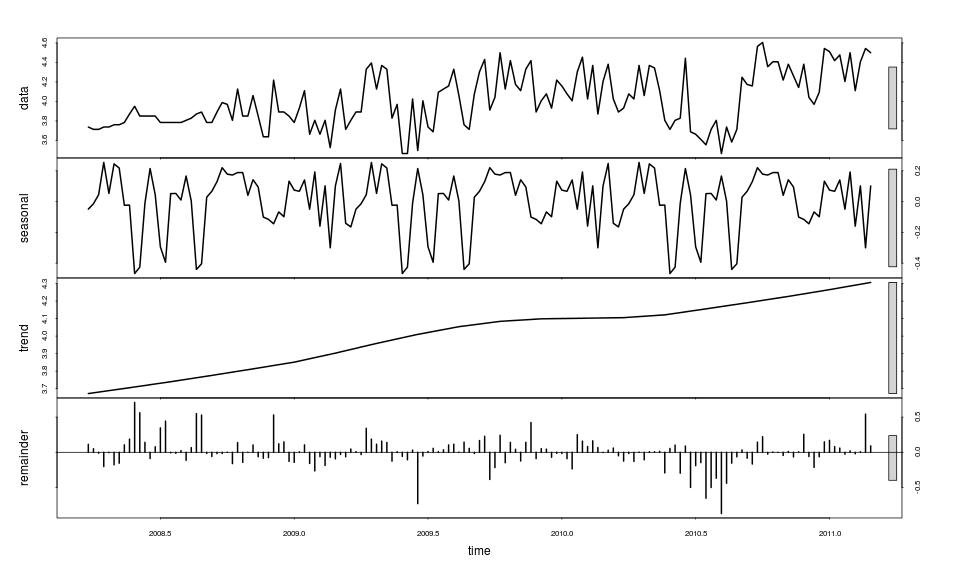

Idea: Detect newly emerging topics against a base-line of past years.

Notes: This is the naive application scenario, although there are many ways in which it can be operationalised.

Operationalisation: Against a corpus of several previous years, examine how the topics identified by Probabilistic Topic Modeling correlate with those for the last six months or year. Both TEL blogs and TEL journal abstracts could be considered.

Challenges: It may be difficult to acquire historical text that is comparable – i.e. not imbalanced due to differences in source or due to quantity.

Parallel Worlds

Idea: There may be different perspectives between sub-communities within TEL: practitioners, researchers and industry. Identify areas of mismatch.

Notes: The mismatch is not so much a source of possible weak signals as an exploration of possible failure in what should be a synergy between sub-communities.

Operationalisation: Compare the topics displayed in email lists for institutional TEL champions, TEL journal abstracts and trade-show exhibitor profiles.

Challenges: Different communities tend to use communications in different ways: medium, style, etc, which is reflected in the different text sources. This may well over-power the capabilities of text mining. Web page scraping will be required for exhibitor profiles and maybe email list archives.

Rumble Strip

A “rumble strip” provides an alert to drivers when they deviate.

Idea: Discover differences between a document and a socially-normalised description of the same topic.

Notes: –

Operationalisation: Use an online classification service (e.g. OpenCalais) to obtain a set of subject “tags” for each document. Retrieve and merge the Wikipedia entries relevant to each. Compare the document term frequencies for the original document and the merged Wikipedia entries.

Challenges: Documents are rarely about a single topic; the practicability of this application scenario is slim.

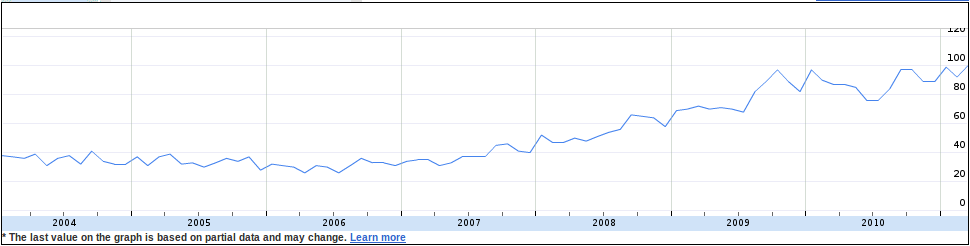

Ripples on the Pond

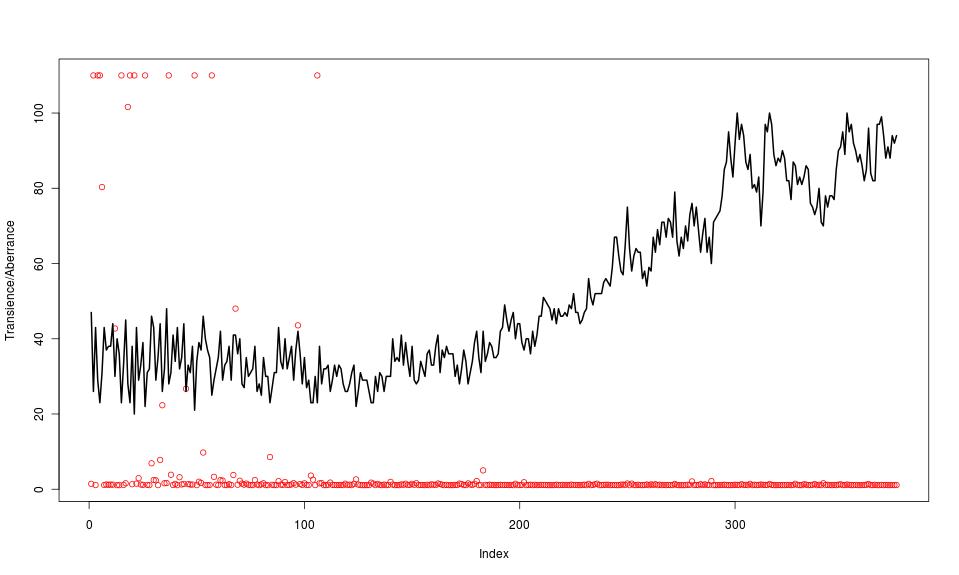

Idea: A new idea or change in the environment may lead to a perturbation in activity in an established topic.

Notes: Being established is key to filtering out hype; these are not new topics.

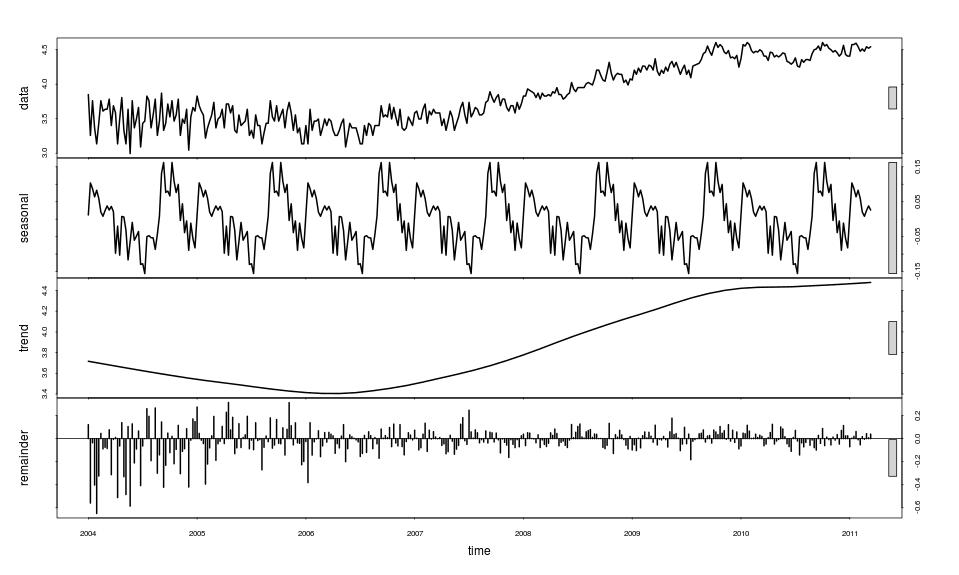

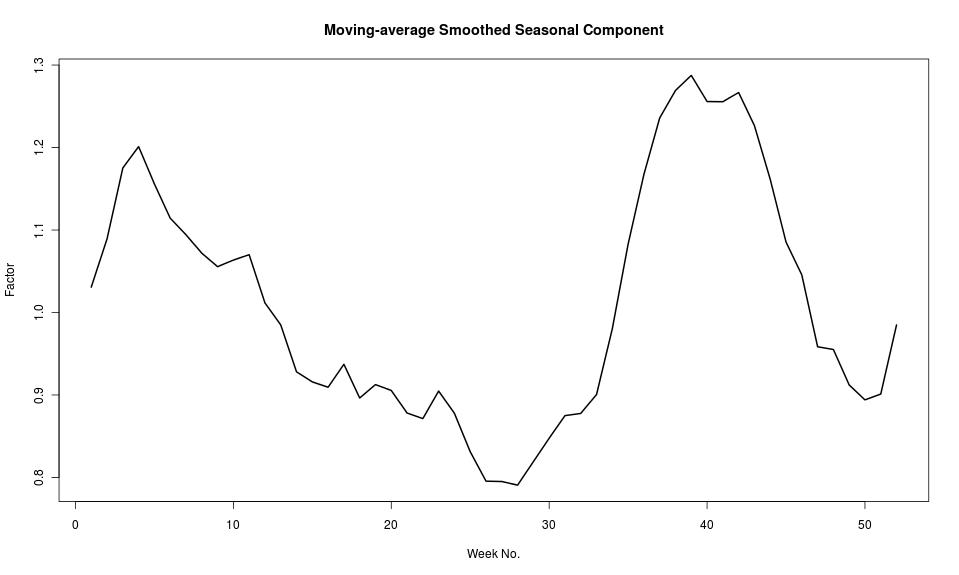

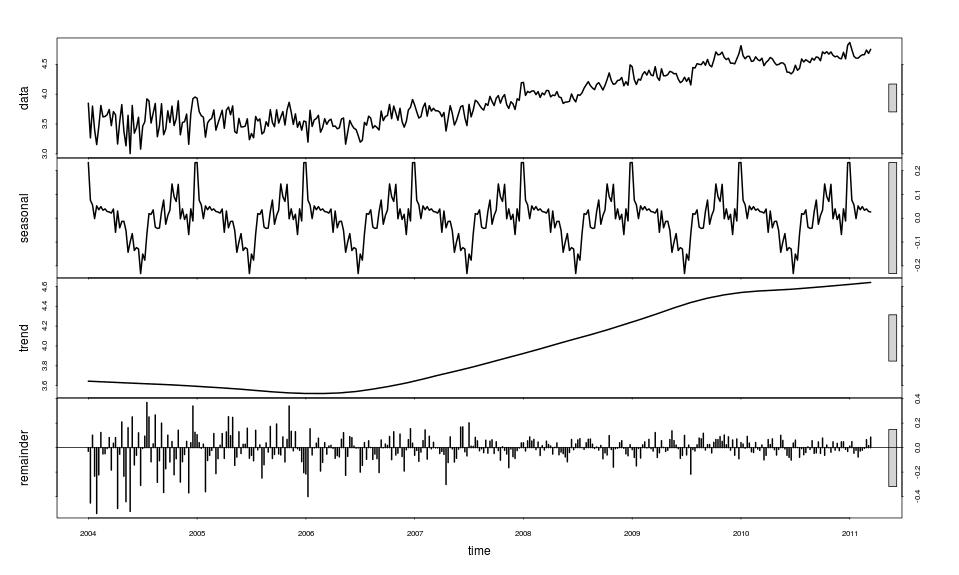

Operationalisation: Identify some key-phrase indicators for established topics (e.g. “learning design”). Mine journal abstracts for the key phrase and analyse the time series of occurrence. Use OAI-PMH sources to provide temporal data.
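
A minimal sketch of the time-series step, in Python: for each year, compute the share of abstracts that mention the key phrase. Using the share rather than the raw count is my assumption, intended to compensate for differing numbers of abstracts per year; matching is a plain case-insensitive substring test, and the sample records are invented.

```python
from collections import Counter

def phrase_share_by_year(records, phrase):
    """records: iterable of (year, text) pairs.

    Returns {year: share of that year's documents containing the phrase}.
    """
    totals, hits = Counter(), Counter()
    needle = phrase.lower()
    for year, text in records:
        totals[year] += 1
        if needle in text.lower():
            hits[year] += 1
    return {year: hits[year] / totals[year] for year in sorted(totals)}

# Invented abstracts for illustration.
records = [(2009, "Learning design in practice"), (2009, "Assessment methods"),
           (2010, "Tools for learning design"), (2010, "Learning Design revisited")]
print(phrase_share_by_year(records, "learning design"))  # {2009: 0.5, 2010: 1.0}
```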

Challenges: The results will be sensitive to the means by which the investigated topics are decided.

Shifting Sands

Idea: Over time the focus within a topic may change although writers would still say they are talking about the same subject. Discover how this focus changes as a source of possible weak signals.

Notes: Although the scenario considers an aggregate of voices in each time period, the voices of individuals may be influential on the results.

Operationalisation: Use key-phrases as for “Ripples on the Pond” but use Probabilistic Topic Modeling with a small number of topics. Analyse the drift in the word-frequencies determined for the most significant topics.
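
Once word-frequencies for a topic are available for two time periods, the drift can be inspected by differencing them word by word. The Python sketch below assumes that the same topic has already been identified in both periods (which is itself a non-trivial matching problem) and uses invented numbers.

```python
def word_drift(earlier, later):
    """Change in frequency per word between two periods, largest change first.

    earlier, later: {word: frequency within the topic} for each period.
    """
    words = set(earlier) | set(later)
    changes = {w: later.get(w, 0.0) - earlier.get(w, 0.0) for w in words}
    # Sort by size of change, then alphabetically so the order is repeatable.
    return sorted(changes.items(), key=lambda item: (-abs(item[1]), item[0]))

# Invented word-frequencies for one topic in two periods.
period_1 = {"design": 0.5, "pattern": 0.5}
period_2 = {"design": 0.5, "analytics": 0.5}
print(word_drift(period_1, period_2))
# [('analytics', 0.5), ('pattern', -0.5), ('design', 0.0)]
```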

Challenges: –

Alchemical Synthesis

Idea: Words being newly-associated may be early signs of an emerging idea.

Notes: –

Operationalisation: Using single-word nouns in the corpus, compute an uplift for n-grams that quantifies the n-gram frequency compared to what it would be by chance. Sample a corpus of TEL domain blog posts and look for bi-grams or tri-grams with un-expected uplift.
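
One way to define the uplift for a bi-gram, assumed here for illustration, is the ratio of its observed relative frequency to the product of the relative frequencies of its two words, i.e. what would be expected if the words occurred independently. The Python sketch below skips the noun filtering and takes already-tokenised documents; the `min_count` threshold is my addition, since ratios based on a single occurrence are unreliable.

```python
from collections import Counter

def bigram_uplift(docs, min_count=2):
    """docs: list of token lists. Returns {(w1, w2): observed / expected}."""
    unigrams, bigrams = Counter(), Counter()
    for tokens in docs:
        unigrams.update(tokens)
        bigrams.update(zip(tokens, tokens[1:]))  # adjacent pairs within a document
    n_uni = sum(unigrams.values())
    n_bi = sum(bigrams.values())
    uplift = {}
    for (w1, w2), count in bigrams.items():
        if count >= min_count:
            expected = (unigrams[w1] / n_uni) * (unigrams[w2] / n_uni)
            uplift[(w1, w2)] = (count / n_bi) / expected
    return uplift

# Invented mini-corpus for illustration.
docs = [["learning", "design", "tools"], ["learning", "design", "ideas"]]
for pair, value in bigram_uplift(docs).items():
    print(pair, round(value, 2))  # ('learning', 'design') 4.5
```

An uplift well above 1 indicates words that occur together more often than chance would suggest; for weak signals the interest would be in pairs whose uplift is new rather than long established.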

Challenges: –

Final Remarks

As was noted at the start, the implementation of these ideas is not yet undertaken. I may be rash in publishing such immature work but I do so in the hope that constructive criticism or offers of collaboration might arise.

There is much more that could be said about issues, challenges and what is kept out of scope but two points warrant comment: I am only looking at text in English and recognise that this gives a biased set of possible weak signals; there are other analytical strategies such as social network analysis that provide interesting results both independently of and alongside the kind of topic-oriented analysis I describe.

I hope to be able to report some possible weak signals in due course for comment and debate. These may appear on the TELMap site but will be signposted from here.