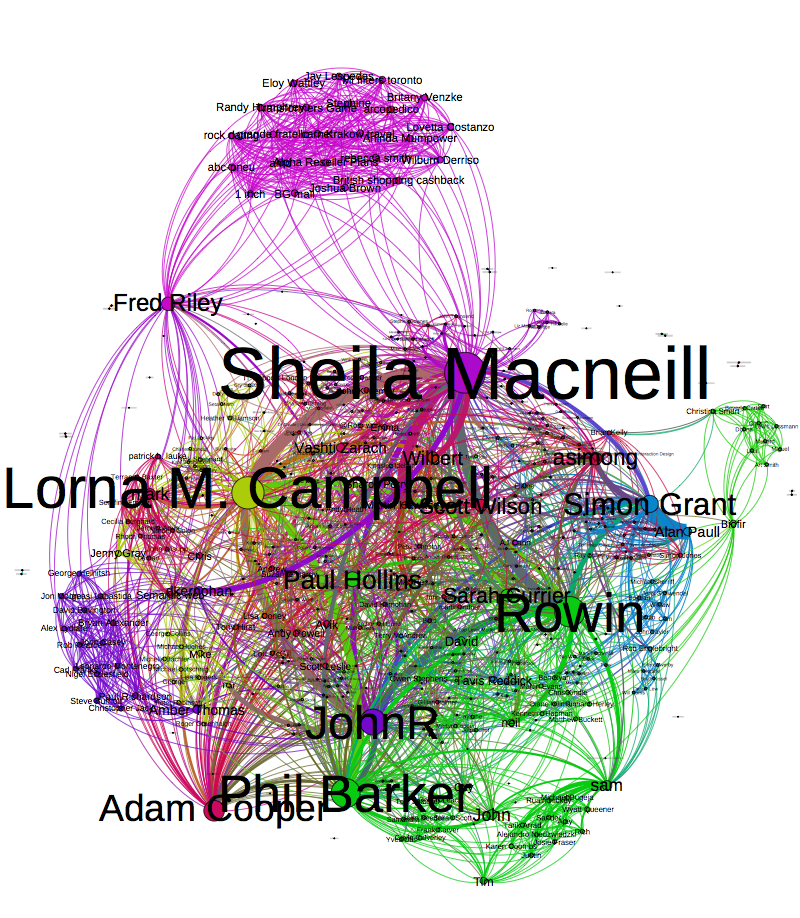

Whether you’re trying to flog wares, advertise consultancy skills or simply have a big ego, it seems that we all want to abuse social media for a cause. I find it interesting that we all choose different social media services, and I guess that our strategy depends on many factors, such as what we are sharing, the audiences we are trying to reach and, ultimately, the size of the service’s user base.

Yesterday Obama claimed the most popular Twitter and Facebook posts of all time: a picture of him and his wife that drew over 640,000 retweets and 3 million likes. It occurred to me that the two huge social media strategies, those of the U.S. presidential candidates, will now be winding down.

With my own social media strategy restricted to posting on Google Sites and this very blog, I thought it would be interesting to poke about and reflect on how the candidates did things. I found that plenty of analysis of both Obama’s and Romney’s campaigns already exists; here are some things I found interesting:

Email still plays a huge role

Ed Hallen did an analysis of both candidates’ email campaigns. Both strategies are quite complex, but it is clear that email plays a huge role, and a few important themes stand out.

• It matters who in the organisation sends the message.

Both campaigns reserved emails that appeared to come from the candidates themselves for the more urgent messages. Other emails came from the VP candidate or a spouse.

• Subject matters

Emails from the Democratic camp often had punchy subject lines containing a semicolon, which Ed claims was a tested way of making people more likely to read the message. The Republican camp, on the other hand, used relaxed one-word subjects, ‘Hey’ being the most common.

• Know your audience

Because recipients have signed up to the lists, both camps know that they are preaching to the converted. The lists seem to have been used more for issues such as fundraising than for trying to win new votes.

Obama on Reddit

Both sides had the obvious online presence on Twitter, Facebook, YouTube, Google+ and LinkedIn, but I was shocked to see Obama do an ‘Ask Me Anything’ on my favourite news aggregation site two months ago. In retrospect, though, Reddit was the perfect choice.

• Targeting an audience on the edge

Reddit often comes under attack for suffering from groupthink: popular opinions are voted to the top, while dissenting ones are voted down and often deleted. Reddit’s U.S. user base is largely young men who lean towards the Democrats. This meant Obama was essentially reaching out to an audience who already supported him but belonged to a demographic not likely to turn out to vote. The groupthink mentality also meant that arguments against his replies would not float to the top. (Plus, his reference to internet memes gave him geek cred. Not Bad!)

Social sites create their own analysis dashboards

While Twitter was an obvious choice for both candidates, I found it interesting that Twitter worked with Topsy to create a dashboard that mined its own data on the candidates and the topics surrounding them. I took this as a sign that the service knows just how important its data is, and as a clear message to the world that if you want to win your cause, you have to play its game. Twitter wasn’t the only service doing this; Microsoft’s example can be found here.

Mitt Romney on 4chan

(I kid)