The announcement of Google’s new Wave technology seems to be causing equal parts excitement and bafflement. For education, it’s worth getting through the bafflement, because the potential is quite exciting.

What is Google Wave?

There are many aspects, and the combination of features is rather innovative, so a degree of blind-people-describing-an-elephant will probably persist. For me, though, Google Wave exists on two levels: one is as a particular social networking tool, not unlike Facebook, Twitter, etc. The other is as a whole new technology, on the same level as email, instant messaging or the web itself.

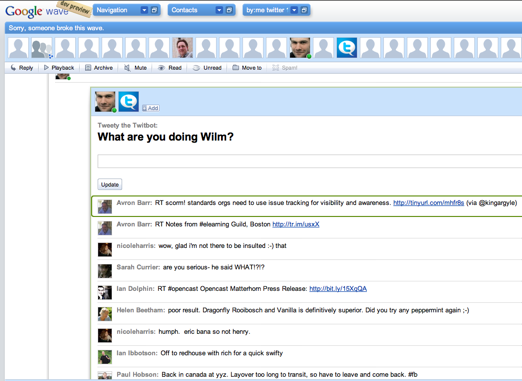

As a social networking tool, Wave’s brain, erm, ‘wave’ is that it focusses on the conversation as the most important organising principle. Unlike most existing social software, communication is not between everyone on your friends/buddies/followers list, but between everyone invited to a particular conversation. That sounds like good old email, but unlike email, a wave is a constantly updated, living document. You can invite new people to it, watch them add stuff as they type, and replay the whole conversation from the beginning.

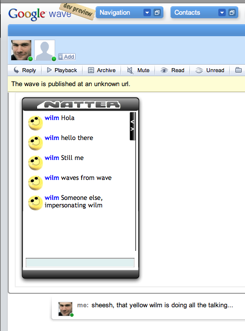

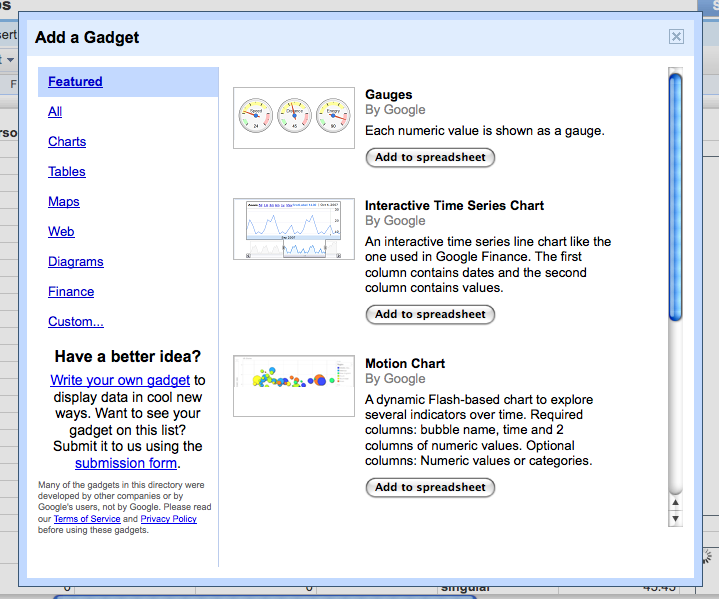

As a new technology, then, Google Wave turns every conversation (or ‘wave’ in Google speak) into a live object on the internet to which you can invite people and other machine services (‘robots’). The wave need not be textual; you can also collaborate on resources or interact with simple tools (‘gadgets’). Between them, gadgets and robots allow developers to bring all kinds of information and functionality into the conversation.
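To make the 'live object' idea a bit more concrete, here is a toy sketch in Python of a conversation that people and robots can be invited to, and that can be replayed from the beginning. It is emphatically not Google's API: the names `Wave`, `Event`, `invite_robot` and `word_count_robot` are all my own inventions for illustration, and the real thing is networked and realtime where this is just an in-memory list.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Event:
    """One contribution to the conversation: who added it and what it says."""
    author: str
    text: str


@dataclass
class Wave:
    """Hypothetical stand-in for a wave: a shared, append-only conversation."""
    participants: List[str] = field(default_factory=list)
    robots: Dict[str, Callable[[Event], Optional[str]]] = field(default_factory=dict)
    history: List[Event] = field(default_factory=list)

    def invite(self, name: str) -> None:
        # People can be added at any point, not just at the start.
        if name not in self.participants:
            self.participants.append(name)

    def invite_robot(self, name: str, handler: Callable[[Event], Optional[str]]) -> None:
        # A robot is a participant that reacts to what others contribute.
        self.invite(name)
        self.robots[name] = handler

    def post(self, author: str, text: str) -> None:
        if author not in self.participants:
            raise ValueError(f"{author} has not been invited to this wave")
        event = Event(author, text)
        self.history.append(event)
        # Robots only see human contributions, so they cannot trigger each other.
        if author not in self.robots:
            for robot_name, handler in self.robots.items():
                reply = handler(event)
                if reply is not None:
                    self.history.append(Event(robot_name, reply))

    def replay(self, upto: Optional[int] = None) -> List[Event]:
        """Return the conversation as it stood after the first `upto` events."""
        return list(self.history[:upto])


def word_count_robot(event: Event) -> Optional[str]:
    """Made-up example robot: reports the length of each contribution."""
    return f"{len(event.text.split())} words"


wave = Wave()
wave.invite("ana")
wave.invite_robot("counter", word_count_robot)
wave.post("ana", "hello wave world")
wave.invite("ben")  # joins late, but can still replay everything
wave.post("ben", "hi")

for event in wave.replay():
    print(f"{event.author}: {event.text}")
```

The point of the sketch is only that the conversation itself, rather than any one message in it, is the thing that participants and services attach to.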

The fact that waves are live objects on the internet points to the potential depth of the new technology. Where email is all about stored messages, and the web about linked resources, Wave is about collaborative events. As such, it builds on the shift to a ‘realtime stream’ approach to social interaction that is being brought about by Twitter in particular.

The really exciting bit about Wave, though, is the promise that – like email and the web, and unlike most social network tools – anyone can play. It doesn’t rely on a single organisation; anyone with access to a server should be able to set up a wave instance, and communicate with other wave instances. The wave interoperability specifications look open, and the code that Google uses will be open sourced too.

Why does Wave matter for teaching and learning?

A lot of educational technology centres around activity and resource management. If you take a social constructivist approach to learning, the activity type that’s most interesting is likely to be group collaboration, and the most interesting resources are those that can be constructed, annotated or modified collaboratively.

A technology like Google Wave has the potential to impact this area significantly, because it is built around the idea of real time document collaboration as the fundamental organising concept. More than that, it allows the participants to determine who is involved with any particular learning activity; it’s not limited to those that have been signed up for a whole course, or even to those who were involved in earlier stages of the collaboration. In that sense, Google Wave strongly resembles pioneering collaborative, participant-run, activity-focussed VLEs such as Colloquia (disclosure: my colleagues built Colloquia).

In order to allow learning activities to become independent of a given VLE or web application, and in order to bring new functionality to such web applications, widgets have become a strong trend in educational technology. Unlike the widgets on these educational platforms (bar one: wookieserver), however, Wave’s widgets are realtime, multi-user and therefore collaborative (disclosure: my colleagues are building wookieserver).

That also points to the learning design aspect of Wave. Like IMS Learning Design tools (or LD-inspired tools such as LAMS), Wave takes the collaborative activity as the central concept. Some concepts, therefore, map straight across: a Unit of Learning is a Wave, an Act is a Wavelet, and there are resources, services and more. The main thing that Wave seems to be missing natively is the concept of role, though it looks like you can define roles specifically for a wave and any gadgets and robots running on it.
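As a rough illustration of that mapping, the sketch below treats a wave as a Unit of Learning, its wavelets as Acts, and bolts a role table on at the level of the individual wave. Everything in it (`LearningWave`, `Wavelet`, `assign`, `can_join`, the role names) is hypothetical and of my own making; it is neither IMS LD nor the Wave API, just one way the missing role concept might be layered on top.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set

# The two pairings stated in the text; other LD concepts are left unmapped here.
LD_TO_WAVE = {"Unit of Learning": "Wave", "Act": "Wavelet"}


@dataclass
class Wavelet:
    """Stands in for an LD Act: one stage of the activity, open to some roles."""
    title: str
    allowed_roles: Set[str]


@dataclass
class LearningWave:
    """Stands in for an LD Unit of Learning, with roles defined per wave."""
    title: str
    roles: Dict[str, str] = field(default_factory=dict)  # participant -> role
    wavelets: List[Wavelet] = field(default_factory=list)

    def assign(self, participant: str, role: str) -> None:
        # Roles live on this wave only; the same person may differ elsewhere.
        self.roles[participant] = role

    def add_wavelet(self, title: str, allowed_roles: Set[str]) -> Wavelet:
        wavelet = Wavelet(title, set(allowed_roles))
        self.wavelets.append(wavelet)
        return wavelet

    def can_join(self, participant: str, wavelet: Wavelet) -> bool:
        # Participants without a role on this wave are kept out of every stage.
        return self.roles.get(participant) in wavelet.allowed_roles


review = LearningWave("Essay review")
review.assign("tutor@example.org", "tutor")
review.assign("sam@example.org", "learner")
drafting = review.add_wavelet("Drafting", {"learner"})
feedback = review.add_wavelet("Feedback", {"tutor", "learner"})

for stage in review.wavelets:
    allowed = [p for p in review.roles if review.can_join(p, stage)]
    print(stage.title, allowed)
```

Keeping the role table on the wave rather than on the person fits the participant-run model: who counts as a tutor is decided per activity, not per course.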

In short, with a couple of extensions to integrate learning-specific gadgets and to interact with institutional systems, Waves could be a powerful pedagogic tool.

But isn’t Google evil?

Well, like other big corporations, Google has done some less than friendly things, particularly in markets where it dominates. Social networking, though, isn’t one of those markets, and therefore, like all companies that need to catch up, it needs to play nice and open.

There might still be some devils in the details, and there’s an awful lot that’s still not clear. But it does seem that Google is treating this as a rising platform/wave that will float all boats. Much as they do with the general web.

Will Wave roll?

I don’t think anyone knows. But the signs look promising: it synthesises a number of things that are happening anyway, particularly the trend towards the realtime stream. As with new technology platforms such as BBSs and the web in the past, we seem to be heading towards the end of a phase of rapid innovation and fragmentation in the social software field. Something like Wave could standardise it, and provide a stable platform for other cool stuff to happen on top of it.

It could well be that Google Wave will not be that catalyst. It certainly seems to have been announced very early in the game, with lots of loose ends, and a user interface that looks fairly unattractive. The concept behind it is also a big leap that could be too far ahead of its time. But I’m sure something very much like Wave will take hold eventually.

Resources:

Google’s Wave site

Wave developer API guide. This is easily the clearest introduction to Wave’s concepts: short and not especially technical.

Very comprehensive article on the ins and outs at Techcrunch